Isabelle Boutron, Matthew J Page, Julian PT Higgins, Douglas G Altman, Andreas Lundh, Asbjørn Hróbjartsson; on behalf of the Cochrane Bias Methods Group

Key Points:

- Review authors should seek to minimize bias. We draw a distinction between two places in which bias should be considered. The first is in the results of the individual studies included in a systematic review. The second is in the result of the meta-analysis (or other synthesis) of findings from the included studies.

- Problems with the design and execution of individual studies of healthcare interventions raise questions about the internal validity of their findings; empirical evidence provides support for this concern.

- An assessment of the internal validity of studies included in a Cochrane Review should emphasize the risk of bias in their results, that is, the risk that they will over-estimate or under-estimate the true intervention effect.

- Results of meta-analyses (or other syntheses) across studies may additionally be affected by bias due to the absence of results from studies that should have been included in the synthesis.

- Review authors should consider source of funding and conflicts of interest of authors of the study, which may inform the exploration of directness and heterogeneity of study results, assessment of risk of bias within studies, and assessment of risk of bias in syntheses owing to missing results.

Cite this chapter as: Boutron I, Page MJ, Higgins JPT, Altman DG, Lundh A, Hróbjartsson A. Chapter 7: Considering bias and conflicts of interest among the included studies. In: Higgins JPT, Thomas J, Chandler J, Cumpston M, Li T, Page MJ, Welch VA (editors). Cochrane Handbook for Systematic Reviews of Interventions version 6.0 (updated July 2019). Cochrane, 2019. Available from www.training.cochrane.org/handbook.

7.1 Introduction

Cochrane Reviews seek to minimize bias. We define bias as a systematic error, or deviation from the truth, in results. Biases can lead to under-estimation or over-estimation of the true intervention effect and can vary in magnitude: some are small (and trivial compared with the observed effect) and some are substantial (so that an apparent finding may be due entirely to bias). A source of bias may even vary in direction across studies. For example, bias due to a particular design flaw such as lack of allocation sequence concealment may lead to under-estimation of an effect in one study but over-estimation in another (Jüni et al 2001).

Bias can arise because of the actions of primary study investigators or because of the actions of review authors, or may be unavoidable due to constraints on how research can be undertaken in practice. Actions of authors can, in turn, be influenced by conflicts of interest. In this chapter we introduce issues of bias in the context of a Cochrane Review, covering both biases in the results of included studies and biases in the results of a synthesis. We introduce the general principles of assessing the risk that bias may be present, as well as the presentation of such assessments and their incorporation into analyses. Finally, we address how source of funding and conflicts of interest of study authors may impact on study design, conduct and reporting. Conflicts of interest held by review authors are also of concern; these should be addressed using editorial procedures and are not covered by this chapter (see Chapter 1, Section 1.3).

We draw a distinction between two places in which bias should be considered. The first is in the results of the individual studies included in a systematic review. Since the conclusions drawn in a review depend on the results of the included studies, if these results are biased, then a meta-analysis of the studies will produce a misleading conclusion. Therefore, review authors should systematically take into account risk of bias in results of included studies when interpreting the results of their review.

The second place in which bias should be considered is the result of the meta-analysis (or other synthesis) of findings from the included studies. This result will be affected by biases in the included studies, and may additionally be affected by bias due to the absence of results from studies that should have been included in the synthesis. Specifically, the conclusions of the review may be compromised when decisions about how, when and where to report results of eligible studies are influenced by the nature and direction of the results. This is the problem of ‘non-reporting bias’ (also described as ‘publication bias’ and ‘selective reporting bias’). There is convincing evidence that results that are statistically non-significant and unfavourable to the experimental intervention are less likely to be published than statistically significant results, and hence are less easily identified by systematic reviews (see Section 7.2.3). This leads to results being missing systematically from syntheses, which can lead to syntheses over-estimating or under-estimating the effects of an intervention. For this reason, the assessment of risk of bias due to missing results is another essential component of a Cochrane Review.

Both the risk of bias in included studies and risk of bias due to missing results may be influenced by conflicts of interest of study investigators or funders. For example, investigators with a financial interest in showing that a particular drug works may exclude participants who did not respond favourably to the drug from the analysis, or fail to report unfavourable results of the drug in a manuscript.

Further discussion of assessing risk of bias in the results of an individual randomized trial is available in Chapter 8, and of a non-randomized study in Chapter 25. Further discussion of assessing risk of bias due to missing results is available in Chapter 13.

7.1.1 Why consider risk of bias?

There is good empirical evidence that particular features of the design, conduct and analysis of randomized trials lead to bias on average, and that some results of randomized trials are suppressed from dissemination because of their nature. However, it is usually impossible to know to what extent biases have affected the results of a particular study or analysis (Savović et al 2012). For these reasons, it is more appropriate to consider whether a result is at risk of bias rather than claiming with certainty that it is biased. Most recent tools for assessing the internal validity of findings from quantitative studies in health now focus on risk of bias, whereas previous tools targeted the broader notion of ‘methodological quality’ (see also Section 7.1.2).

Bias should not be confused with imprecision. Bias refers to systematic error, meaning that multiple replications of the same study would reach the wrong answer on average. Imprecision refers to random error, meaning that multiple replications of the same study will produce different effect estimates because of sampling variation, but would give the right answer on average. Precision depends on the number of participants and (for dichotomous outcomes) the number of events in a study, and is reflected in the confidence interval around the intervention effect estimate from each study. The results of smaller studies are subject to greater sampling variation and hence are less precise. A small trial may be at low risk of bias yet its result may be estimated very imprecisely, with a wide confidence interval. Conversely, the results of a large trial may be precise (narrow confidence interval) but also at a high risk of bias.
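The distinction between systematic and random error can be illustrated with a small simulation. The sketch below is only an illustration: the true effect, the size of the bias, the standard deviation and the sample sizes are hypothetical values, and `simulate_estimates` is a name introduced here. It replicates a small unbiased study and a large biased study many times and compares the average and the spread of the resulting effect estimates.

```python
import random
import statistics


def simulate_estimates(true_effect, bias, sd, n_participants, n_replications, seed):
    """Simulate effect estimates from repeated replications of one hypothetical study.

    Each replication draws n_participants observations centred on
    true_effect + bias and reports their mean as the effect estimate.
    """
    rng = random.Random(seed)
    estimates = []
    for _ in range(n_replications):
        observations = [rng.gauss(true_effect + bias, sd) for _ in range(n_participants)]
        estimates.append(statistics.fmean(observations))
    return estimates


TRUE_EFFECT = 1.0  # hypothetical true intervention effect

# Small study with no systematic error: imprecise, but right on average.
small_unbiased = simulate_estimates(TRUE_EFFECT, bias=0.0, sd=2.0,
                                    n_participants=20, n_replications=500, seed=1)

# Large study with a systematic error of 0.5: precise, but wrong on average.
large_biased = simulate_estimates(TRUE_EFFECT, bias=0.5, sd=2.0,
                                  n_participants=500, n_replications=500, seed=2)

for label, estimates in [("small, unbiased", small_unbiased),
                         ("large, biased", large_biased)]:
    print(f"{label}: mean estimate = {statistics.fmean(estimates):.2f}, "
          f"spread (SD of estimates) = {statistics.stdev(estimates):.2f}")
```

Under these assumptions the small study's estimates scatter widely around the true effect, whereas the large study's estimates cluster tightly around a value that is shifted away from it.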

Bias should also not be confused with the external validity of a study, that is, the extent to which the results of a study can be generalized to other populations and settings. For example, a study may enrol participants who are not representative of the population who most commonly experience a particular clinical condition. The results of this study may have limited generalizability to the wider population, but will not necessarily give a biased estimate of the effect in the highly specific population on which it is based. Factors influencing the applicability of an included study to the review question are covered in Chapter 14 and Chapter 15.

7.1.2 From quality scales to domain-based tools

Critical assessment of included studies has long been an important component of a systematic review or meta-analysis, and methods have evolved greatly over time. Early appraisal tools were structured as quality ‘scales’, which combined information on several features into a single score. However, this approach was questioned after it was revealed that the type of quality scale used could significantly influence the interpretation of the meta-analysis results (Jüni et al 1999). That is, risk ratios of trials deemed ‘high quality’ by some scales suggested that the experimental intervention was superior, whereas when trials were deemed ‘high quality’ by other scales, the opposite was the case. The lack of a theoretical framework underlying the concept of ‘quality’ assessed by these scales resulted in tools mixing different concepts such as risk of bias, imprecision, relevance, applicability, ethics, and completeness of reporting. Furthermore, the summary score combining these components is difficult to interpret (Jüni et al 2001).

In 2008, Cochrane released the Cochrane risk-of-bias (RoB) tool, which was slightly revised in 2011 (Higgins et al 2011). The tool was built on the following key principles:

- The tool focused on a single concept: risk of bias. It did not consider other concepts such as the quality of reporting, precision (the extent to which results are free of random errors), or external validity (directness, applicability or generalizability).

- The tool was based on a domain-based (or component) approach, in which different types of bias are considered in turn. Users were asked to assess seven domains: random sequence generation, allocation sequence concealment, blinding of participants and personnel, blinding of outcome assessment, incomplete outcome data, selective outcome reporting, and other sources of bias. There was no scoring system in the tool.

- The domains were selected to characterize mechanisms through which bias may be introduced into a trial, based on a combination of theoretical considerations and empirical evidence.

- The assessment of risk of bias required judgement and should thus be completely transparent. Review authors provided a judgement for each domain, rated as ‘low’, ‘high’ or ‘unclear’ risk of bias, and provided reasons to support their judgement.

This tool has been implemented widely both in Cochrane Reviews and non-Cochrane reviews (Jørgensen et al 2016). However, user testing has raised some concerns related to the modest inter-rater reliability of some domains (Hartling et al 2013), the need to rethink the theoretical background of the ‘selective outcome reporting’ domain (Page and Higgins 2016), the misuse of the ‘other sources of bias’ domain (Jørgensen et al 2016), and the lack of appropriate consideration of the risk-of-bias assessment in the analyses and interpretation of results (Hopewell et al 2013).

To address these concerns, a new version of the Cochrane risk-of-bias tool, RoB 2, has been developed. The tool, described in Chapter 8, includes important innovations in the assessment of risk of bias in randomized trials. The structure of the tool is similar to that of the ROBINS-I tool for non-randomized studies of interventions (described in Chapter 25). Both tools include a fixed set of bias domains, which are intended to cover all issues that might lead to a risk of bias. To help reach risk-of-bias judgements, a series of ‘signalling questions’ are included within each domain. Also, the assessment is typically specific to a particular result. This is because the risk of bias may differ depending on how an outcome is measured and how the data for the outcome are analysed. For example, if two analyses for a single outcome are presented, one adjusted for baseline prognostic factors and the other not, then the risk of bias in the two results may be different. The risk of bias in at least one specific result for each included study should be assessed in all Cochrane Reviews (MECIR Box 7.1.a).

MECIR Box 7.1.a Relevant expectations for conduct of intervention reviews

| C52: Assessing risk of bias (Mandatory) | |
| --- | --- |
| Assess the risk of bias in at least one specific result for each included study. For randomized trials, the RoB 2 tool should be used, involving judgements and support for those judgements across a series of domains of bias, as described in this Handbook. | The risk of bias in at least one specific result for every included study must be explicitly considered to determine the extent to which its findings can be believed, noting that risks of bias might vary by result. Recommendations for assessing bias in randomized studies included in Cochrane Reviews are now well established. The RoB 2 tool – as described in this Handbook – must be used for all randomized trials in new reviews. This does not prevent other tools being used. |

7.2 Empirical evidence of bias

Where possible, assessments of risk of bias in a systematic review should be informed by evidence. The following sections summarize some of the key evidence about bias that informs our guidance on risk-of-bias assessments in Cochrane Reviews.

7.2.1 Empirical evidence of bias in randomized trials: meta-epidemiologic studies

Many empirical studies have shown that methodological features of the design, conduct and reporting of studies are associated with biased intervention effect estimates. This evidence is mainly based on meta-epidemiologic studies using a large collection of meta-analyses to investigate the association between a reported methodological characteristic and intervention effect estimates in randomized trials. The first meta-epidemiologic study was published in 1995. It showed exaggerated intervention effect estimates when intervention allocation methods were inadequate or unclear and when trials were not described as double-blinded (Schulz et al 1995). These results were subsequently confirmed in several meta-epidemiologic studies, showing that lack of reporting of adequate random sequence generation, allocation sequence concealment, double blinding and more specifically blinding of outcome assessors tend to yield higher intervention effect estimates on average (Dechartres et al 2016a, Page et al 2016).

Evidence from meta-epidemiologic studies suggests that the influence of methodological characteristics such as lack of blinding and inadequate allocation sequence concealment varies by the type of outcome. For example, the extent of over-estimation is larger when the outcome is subjectively measured (e.g. pain) and therefore likely to be influenced by knowledge of the intervention received, and lower when the outcome is objectively measured (e.g. death) and therefore unlikely to be influenced by knowledge of the intervention received (Wood et al 2008, Savović et al 2012).

7.2.2 Trial characteristics explored in meta-epidemiologic studies that are not considered sources of bias

Researchers have also explored the influence of other trial characteristics that are not typically considered a threat to a direct causal inference for intervention effect estimates. Recent meta-epidemiologic studies have shown that effect estimates were lower in prospectively registered trials compared with trials not registered or registered retrospectively (Dechartres et al 2016b, Odutayo et al 2017). Others have shown an association between sample size and effect estimates, with larger effects observed in smaller trials (Dechartres et al 2013). Studies have also shown a consistent association between intervention effect and single or multiple centre status, with single-centre trials showing larger effect estimates, even after controlling for sample size (Dechartres et al 2011).

In some of these cases, plausible bias mechanisms can be hypothesized. For example, both the number of centres and sample size may be associated with intervention effect estimates because of non-reporting bias (e.g. single-centre studies and small studies may be more likely to be published when they have larger, statistically significant effects than when they have smaller, non-significant effects); or single-centre and small studies may be subject to less stringent controls and checks. However, alternative explanations are possible, such as differences in factors relating to external validity (e.g. participants in small, single-centre trials may be more homogeneous than participants in other trials). Because of this, these factors are not directly captured by the risk-of-bias tools recommended by Cochrane. Review authors should record these characteristics systematically for each study included in the systematic review (e.g. in the ‘Characteristics of included studies’ table) where appropriate. For example, trial registration status should be recorded for all randomized trials identified.

7.2.3 Empirical evidence of non-reporting biases

A list of the key types of non-reporting biases is provided in Table 7.2.a. In the sections that follow, we provide some of the evidence that underlies this list.

Table 7.2.a Definitions of some types of non-reporting biases

| Type of reporting bias | Definition |
| --- | --- |
| Publication bias | The publication or non-publication of research findings, depending on the nature and direction of the results. |
| Time-lag bias | The rapid or delayed publication of research findings, depending on the nature and direction of the results. |
| Language bias | The publication of research findings in a particular language, depending on the nature and direction of the results. |
| Citation bias | The citation or non-citation of research findings, depending on the nature and direction of the results. |
| Multiple (duplicate) publication bias | The multiple or singular publication of research findings, depending on the nature and direction of the results. |
| Location bias | The publication of research findings in journals with different ease of access or levels of indexing in standard databases, depending on the nature and direction of results. |
| Selective (non-) reporting bias | The selective reporting of some outcomes or analyses, but not others, depending on the nature and direction of the results. |

7.2.3.1 Selective publication of study reports

There is convincing evidence that the publication of a study report is influenced by the nature and direction of its results (Chan et al 2014). Direct empirical evidence of such selective publication (or ‘publication bias’) is obtained from analysing a cohort of studies in which there is a full accounting of what is published and unpublished (Franco et al 2014). Schmucker and colleagues analysed the proportion of published studies in 39 cohorts (including 5112 studies identified from research ethics committees and 12,660 studies identified from trials registers) (Schmucker et al 2014). Only half of the studies were published, and studies with statistically significant results were more likely to be published than those with non-significant results (odds ratio (OR) 2.8; 95% confidence interval (CI) 2.2 to 3.5) (Schmucker et al 2014). Similar findings were observed by Scherer and colleagues, who conducted a systematic review of 425 studies that explored subsequent full publication of research initially presented at biomedical conferences (Scherer et al 2018). Only 37% of the 307,028 abstracts presented at conferences were published later in full (60% for randomized trials), and abstracts with statistically significant results in favour of the experimental intervention (versus results in favour of the comparator intervention) were more likely to be published in full (OR 1.17; 95% CI 1.07 to 1.28) (Scherer et al 2018). By examining a cohort of 164 trials submitted to the FDA for regulatory approval, Rising and colleagues found that trials with favourable results were more likely than those with unfavourable results to be published (OR 4.7; 95% CI 1.33 to 17.1) (Rising et al 2008).
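To show how an odds ratio and confidence interval of the kind quoted above arise, the following sketch computes both from a single two-by-two table using the standard log-scale (Wald-type) approximation. It is an illustration only: the cited figures come from pooled analyses across many cohorts, the cell counts below are hypothetical, and `odds_ratio_with_ci` is a name introduced here.

```python
import math


def odds_ratio_with_ci(sig_published, sig_unpublished,
                       nonsig_published, nonsig_unpublished, z=1.96):
    """Odds ratio for publication (significant vs non-significant results)
    with an approximate 95% confidence interval computed on the log scale.

    All four cell counts must be greater than zero.
    """
    odds_ratio = (sig_published * nonsig_unpublished) / (sig_unpublished * nonsig_published)
    se_log_or = math.sqrt(1 / sig_published + 1 / sig_unpublished
                          + 1 / nonsig_published + 1 / nonsig_unpublished)
    lower = math.exp(math.log(odds_ratio) - z * se_log_or)
    upper = math.exp(math.log(odds_ratio) + z * se_log_or)
    return odds_ratio, lower, upper


# Hypothetical cohort: 100 studies with significant results (70 published)
# and 100 studies with non-significant results (45 published).
or_estimate, ci_lower, ci_upper = odds_ratio_with_ci(70, 30, 45, 55)
print(f"OR {or_estimate:.2f}; 95% CI {ci_lower:.2f} to {ci_upper:.2f}")
```

An odds ratio above 1 with a confidence interval that excludes 1 would indicate, in this hypothetical cohort, that studies with statistically significant results had higher odds of being published.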

In addition to being more likely than unpublished randomized trials to have statistically significant results, published trials also tend to report larger effect estimates in favour of the experimental intervention than trials disseminated elsewhere (e.g. in conference abstracts, theses, books or government reports) (ratio of odds ratios 0.90; 95% CI 0.82 to 0.98) (Dechartres et al 2018). This bias has been observed in studies in many scientific disciplines, including the medical, biological, physical and social sciences (Polanin et al 2016, Fanelli et al 2017).

7.2.3.2 Other types of selective dissemination of study reports

The length of time between completion of a study and publication of its results can be influenced by the nature and direction of the study results (‘time-lag bias’). Several studies suggest that randomized trials with results that favour the experimental intervention are published in journals about one year earlier on average than trials with unfavourable results (Hopewell et al 2007, Urrutia et al 2016).

Investigators working in a non-English speaking country may publish some of their work in local, non-English language journals, which may not be indexed in the major biomedical databases (‘language bias’). It has long been assumed that investigators are more likely to publish positive studies in English-language journals than in local, non-English language journals (Morrison et al 2012). Contrary to this belief, Dechartres and colleagues identified larger intervention effects in randomized trials published in a language other than English than in English (ratio of odds ratios 0.86; 95% CI 0.78 to 0.95), which the authors hypothesized may be related to the higher risk of bias observed in the non-English language trials (Dechartres et al 2018). Several studies have found that in most cases there were no major differences between summary estimates of meta-analyses restricted to English-language studies compared with meta-analyses including studies in languages other than English (Morrison et al 2012, Dechartres et al 2018).

The number of times a study report is cited appears to be influenced by the nature and direction of its results (‘citation bias’). In a meta-analysis of 21 methodological studies, Duyx and colleagues observed that articles with statistically significant results were cited at 1.57 times the rate of articles with non-significant results (rate ratio 1.57; 95% CI 1.34 to 1.83) (Duyx et al 2017). They also found that articles with results in a positive direction (regardless of their statistical significance) were cited at 2.14 times the rate of articles with results in a negative direction (rate ratio 2.14; 95% CI 1.29 to 3.56) (Duyx et al 2017). In an analysis of 33,355 studies across all areas of science, Fanelli and colleagues found that the number of citations received by a study was positively correlated with the magnitude of effects reported (Fanelli et al 2017). If positive studies are more likely to be cited, they may be more likely to be located, and thus more likely to be included in a systematic review.

Investigators may report the results of their study across multiple publications; for example, Blümle and colleagues found that of 807 studies approved by a research ethics committee in Germany from 2000 to 2002, 135 (17%) had more than one corresponding publication (Blümle et al 2014). Evidence suggests that studies with statistically significant results or larger treatment effects are more likely to lead to multiple publications (‘multiple (duplicate) publication bias’) (Easterbrook et al 1991, Tramèr et al 1997), which makes it more likely that they will be located and included in a meta-analysis.

Research suggests that the accessibility or level of indexing of journals is associated with effect estimates in trials (‘location bias’). For example, a study of 61 meta-analyses found that trials published in journals indexed in Embase but not MEDLINE yielded smaller effect estimates than trials indexed in MEDLINE (ratio of odds ratios 0.71; 95% CI 0.56 to 0.90); however, the risk of bias due to not searching Embase may be minor, given the lower prevalence of Embase-unique trials (Sampson et al 2003). Also, Moher and colleagues estimate that 18,000 biomedical research studies are tucked away in ‘predatory’ journals, which actively solicit manuscripts and charge publication fees without providing robust editorial services (such as peer review and archiving or indexing of articles) (Moher et al 2017). The direction of bias associated with non-inclusion of studies published in predatory journals depends on whether they are publishing valid studies with null results or studies whose results are biased towards finding an effect.

7.2.3.3 Selective dissemination of study results

The need to compress a substantial amount of information into a few journal pages, along with a desire for the most noteworthy findings to be published, can lead to omission from publication of results for some outcomes because of the nature and direction of the findings. Particular results may not be reported at all (‘selective non-reporting of results’) or be reported incompletely (‘selective under-reporting of results’, e.g. stating only that “P>0.05” rather than providing summary statistics or an effect estimate and measure of precision) (Kirkham et al 2010). In such instances, the data necessary to include the results in a meta-analysis are unavailable. Excluding such studies from the synthesis ignores the information that no significant difference was found, and biases the synthesis towards finding a difference (Schmid 2016).
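The consequence of leaving incompletely reported results out of a synthesis can be sketched with a simulation. The example below is illustrative only and rests on stated assumptions: every study estimates the same hypothetical true effect, studies with non-significant results report only “P>0.05” and are therefore unusable, and the usable studies are combined with a simple fixed-effect inverse-variance average. The function name `pooled_estimate` and all numerical values are introduced here for illustration.

```python
import random


def pooled_estimate(estimates, standard_errors):
    """Fixed-effect (inverse-variance weighted) pooled estimate."""
    weights = [1 / se ** 2 for se in standard_errors]
    return sum(w * e for w, e in zip(weights, estimates)) / sum(weights)


rng = random.Random(7)
TRUE_EFFECT = 0.2  # hypothetical true effect, identical in every study

# Simulate 200 studies of varying precision.
studies = []
for _ in range(200):
    se = rng.uniform(0.1, 0.4)
    studies.append((rng.gauss(TRUE_EFFECT, se), se))

# Studies whose result was non-significant report only "P>0.05",
# so their estimates cannot enter the meta-analysis.
usable = [(est, se) for est, se in studies if abs(est / se) > 1.96]

pooled_all = pooled_estimate(*zip(*studies))
pooled_usable = pooled_estimate(*zip(*usable))

print(f"true effect: {TRUE_EFFECT:.2f}")
print(f"pooled, all {len(studies)} studies: {pooled_all:.2f}")
print(f"pooled, {len(usable)} fully reported studies: {pooled_usable:.2f}")
```

Under these assumptions the synthesis restricted to fully reported results over-estimates the effect, because the studies it can include were selected for having extreme estimates.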

Evidence of selective non-reporting and under-reporting of results in randomized trials has been obtained by comparing what was pre-specified in a trial protocol with what is available in the final trial report. In two landmark studies, Chan and colleagues found that results were not reported for at least one benefit outcome in 71% of randomized trials in one cohort (Chan et al 2004a) and 88% in another (Chan et al 2004b). Results were under-reported (e.g. stating only that “P>0.05”) for at least one benefit outcome in 92% of randomized trials in one cohort and 96% in another. Statistically significant results for benefit outcomes were twice as likely as non-significant results to be completely reported (range of odds ratios 2.4 to 2.7) (Chan et al 2004a, Chan et al 2004b). Reviews of studies investigating selective non-reporting and under-reporting of results suggest that it is more common for outcomes defined by trialists as secondary rather than primary (Jones et al 2015, Li et al 2018).

Selective non-reporting and under-reporting of results occurs for both benefit and harm outcomes. Examining the studies included in a sample of 283 Cochrane Reviews, Kirkham and colleagues suspected that 50% of 712 studies with results missing for the primary benefit outcome of the review were missing because of the nature of the results (Kirkham et al 2010). This estimate was slightly higher (63%) in 393 studies with results missing for the primary harm outcome of 322 systematic reviews (Saini et al 2014).

7.3 General procedures for risk-of-bias assessment

7.3.1 Collecting information for assessment of risk of bias

Information for assessing the risk of bias can be found in several sources, including published articles, trials registers, protocols, clinical study reports (i.e. documents prepared by pharmaceutical companies, which provide extensive detail on trial methods and results), and regulatory reviews (see also Chapter 5, Section 5.2).

Published articles are the most frequently used source of information for assessing risk of bias. This source is theoretically very valuable because it has been reviewed by editors and peer reviewers, who ideally will have prompted authors to report their methods transparently. However, the completeness of reporting of published articles is, in general, quite poor, and essential information for assessing risk of bias is frequently missing. For example, across 20,920 randomized trials included in a set of 2001 Cochrane Reviews, the percentage of trials at unclear risk of bias was 49% for random sequence generation, 57% for allocation sequence concealment, 31% for blinding and 25% for incomplete outcome data (Dechartres et al 2017). Nevertheless, more recent trials were less likely to be judged at unclear risk of bias, suggesting that reporting is improving over time (Dechartres et al 2017).

Trials registers can be a useful source of information to obtain results of studies that have not yet been published (Riveros et al 2013). However, registers typically report only limited information about methods used in the trial to inform an assessment of risk of bias (Wieseler et al 2012). Protocols, which outline the objectives, design, methodology, statistical considerations and procedural aspects of a clinical study, may provide more detailed information on the methods used than that provided in the results report of a study. They are increasingly being published or made available by journals that publish the final report of a study. Protocols are also available in some trials registers, particularly ClinicalTrials.gov (Zarin et al 2016), on websites dedicated to data sharing such as ClinicalStudyDataRequest.com, or from drug regulatory authorities such as the European Medicines Agency. Clinical study reports are another highly useful source of information (Wieseler et al 2012, Jefferson et al 2014).

It may be necessary to contact study investigators to request access to the trial protocol, to clarify incompletely reported information or understand discrepant information available in different sources. To reduce the risk that study authors provide overly positive answers to questions about study design and conduct, we suggest review authors use open-ended questions. For example, to obtain information about the randomization process, review authors might consider asking: ‘What process did you use to assign each participant to an intervention?’ To obtain information about blinding of participants, it might be useful to request something like, ‘Please describe any measures used to ensure that trial participants were unaware of the intervention to which they were assigned’. More focused questions can then be asked to clarify remaining uncertainties.

7.3.2 Performing assessments of risk of bias

Risk-of-bias assessments in Cochrane Reviews should be performed independently by at least two people (MECIR Box 7.3.a). Doing so can minimize errors in assessments and ensure that the judgement is not influenced by a single person’s preconceptions. Review authors should also define in advance the process for resolving disagreements. For example, both assessors may attempt to resolve disagreements via discussion, and if that fails, call on another author to adjudicate the final judgement. Review authors assessing risk of bias should have either content or methodological expertise (or both), and an adequate understanding of the relevant methodological issues addressed by the risk-of-bias tool. There is some evidence that intensive, standardized training may significantly improve the reliability of risk-of-bias assessments (da Costa et al 2017). To improve reliability of assessments, a review team could consider piloting the risk-of-bias tool on a sample of articles. This may help ensure that criteria are applied consistently and that consensus can be reached. Three to six papers should provide a suitable sample for this. We do not recommend the use of statistical measures of agreement (such as kappa statistics) to describe the extent to which assessments by multiple authors were the same. It is more important that reasons for any disagreement are explored and resolved.
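One way to support this process is to list the specific judgements on which the two assessors differ, so that each can be discussed and resolved, rather than summarizing agreement in a single statistic. The sketch below is a hypothetical illustration: the function name `find_disagreements`, the study labels, the domain names and the judgements are all assumptions made for the example, not part of any Cochrane tool or software.

```python
def find_disagreements(assessor_a, assessor_b):
    """List (study, domain) pairs on which two independent assessments differ.

    Each argument maps (study_id, domain) -> judgement. A pair assessed by
    only one person is also reported, with None for the missing judgement.
    """
    disagreements = []
    for key in sorted(set(assessor_a) | set(assessor_b)):
        judgement_a = assessor_a.get(key)
        judgement_b = assessor_b.get(key)
        if judgement_a != judgement_b:
            disagreements.append((key, judgement_a, judgement_b))
    return disagreements


# Hypothetical judgements from two review authors working independently.
first_assessor = {
    ("Trial 1", "randomization process"): "low",
    ("Trial 1", "missing outcome data"): "some concerns",
    ("Trial 2", "randomization process"): "high",
}
second_assessor = {
    ("Trial 1", "randomization process"): "low",
    ("Trial 1", "missing outcome data"): "high",
    ("Trial 2", "randomization process"): "high",
}

for (study, domain), a, b in find_disagreements(first_assessor, second_assessor):
    print(f"{study} / {domain}: assessor 1 = {a}, assessor 2 = {b} "
          "-> discuss, then adjudicate if unresolved")
```

The output is a worklist for discussion and, if needed, adjudication by another author, following whatever process the review team defined in advance.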

MECIR Box 7.3.a Relevant expectations for conduct of intervention reviews

| C53: Assessing risk of bias in duplicate (Mandatory) | |
| --- | --- |
| Use (at least) two people working independently to apply the risk-of-bias tool to each result in each included study, and define in advance the process for resolving disagreements. | Duplicating the risk-of-bias assessment reduces both the risk of making mistakes and the possibility that assessments are influenced by a single person’s biases. |

The process for reaching risk-of-bias judgements should be transparent. In other words, readers should be able to discern why a particular result was rated at low risk of bias and why another was rated at high risk of bias. This can be achieved by review authors providing information in risk-of-bias tables to justify the judgement made. Such information may include direct quotes from study reports that articulate which methods were used, and an explanation for why such a method is flawed. Cochrane Review authors are expected to record the source of information (including the precise location within a document) that informed each risk-of-bias judgement (MECIR Box 7.3.b).

MECIR Box 7.3.b Relevant expectations for conduct of intervention reviews

| C54: Supporting judgements of risk of bias (Mandatory) | |
| --- | --- |
| Justify judgements of risk of bias (high, low and some concerns) and provide this information in the risk-of-bias tables (as ‘Support for judgement’). | Providing support for the judgement makes the process transparent. |
| C55: Providing sources of information for risk-of-bias assessments (Mandatory) | |
| Collect the source of information for each risk-of-bias judgement (e.g. quotation, summary of information from a trial report, correspondence with investigator, etc). Where judgements are based on assumptions made on the basis of information provided outside publicly available documents, this should be stated. | Readers, editors and referees should have the opportunity to see for themselves from where supports for judgements have been obtained. |
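One way to make the expectations in C54 and C55 concrete is to store each judgement together with its support and source. The following is a minimal sketch in Python, not a Cochrane data format: the class name `RiskOfBiasJudgement`, its field names and the example values are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

# Judgement labels as listed in MECIR item C54.
ALLOWED_JUDGEMENTS = ("low", "some concerns", "high")


@dataclass(frozen=True)
class RiskOfBiasJudgement:
    """One risk-of-bias judgement for one result (hypothetical record layout)."""
    study_id: str
    result: str      # the specific result that was assessed
    domain: str      # bias domain, or "overall"
    judgement: str   # one of ALLOWED_JUDGEMENTS
    support: str     # 'Support for judgement' text, e.g. a direct quote
    source: str      # e.g. trial report, protocol, correspondence
    location: str    # precise location within the source document

    def __post_init__(self):
        # Reject labels outside the three judgement categories.
        if self.judgement not in ALLOWED_JUDGEMENTS:
            raise ValueError(f"unknown judgement: {self.judgement!r}")
        # A judgement without support, source or location is not transparent.
        for name in ("support", "source", "location"):
            if not getattr(self, name).strip():
                raise ValueError(f"{name} must not be empty")


# Illustrative record only; the study and the quoted support are invented.
example = RiskOfBiasJudgement(
    study_id="Trial A (hypothetical)",
    result="Knowledge score at 6 months",
    domain="randomization process",
    judgement="low",
    support="Report describes a central, computer-generated allocation sequence.",
    source="trial report",
    location="Methods, p. 3, paragraph 2",
)
```

Rejecting records with an empty support, source or location at creation time means an unjustified judgement cannot reach the risk-of-bias table unnoticed.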

Trial reports often contain many results, so review authors should think carefully about which results to assess for risk of bias. We suggest that review authors assess risk of bias in results for outcomes that are included in the ‘Summary of findings’ table (MECIR Box 7.3.c). Such tables typically include seven or fewer patient-important outcomes (for more details on constructing a ‘Summary of findings’ table, see Chapter 14).

MECIR Box 7.3.c Relevant expectations for conduct of intervention reviews

| C56: Ensuring results of outcomes included in ‘Summary of findings’ tables are assessed for risk of bias (Highly desirable) | |
| --- | --- |
| Ensure that assessments of risk of bias cover the outcomes included in the ‘Summary of findings’ table. | It may not be feasible to assess the risk of bias in every single result available across the included studies, particularly if a large number of studies and results are available. Review authors should strive to assess risk of bias in the results of outcomes that are most important to patients. Such outcomes will typically be included in ‘Summary of findings’ tables, which present the findings of seven or fewer patient-important outcomes. |

Novel methods for assessing risk of bias are emerging, including machine learning systems designed to semi-automate risk-of-bias assessment (Marshall et al 2016, Millard et al 2016). These methods use a sample of previous risk-of-bias assessments to train machine learning models that predict risk of bias from PDFs of study reports and extract supporting text for the judgements. Some of these approaches performed well at identifying sentences pertinent to risk of bias in the full text of research articles describing clinical trials. One study showed that, if such a tool is used, about one-third of articles could be assessed by one reviewer rather than the two usually required (Millard et al 2016). However, reliability in reaching judgements about risk of bias compared with human reviewers was slight to moderate depending on the domain assessed (Gates et al 2018).

7.4 Presentation of assessment of risk of bias

Risk-of-bias assessments may be presented in a Cochrane Review in various ways. A full risk-of-bias table includes responses to each signalling question within each domain (see Chapter 8, Section 8.2) and risk-of-bias judgements, along with text to support each judgement. Such full tables are lengthy and are unlikely to be of great interest to readers, so should generally not be included in the main body of the review. It is nevertheless good practice to make these full tables available for reference.

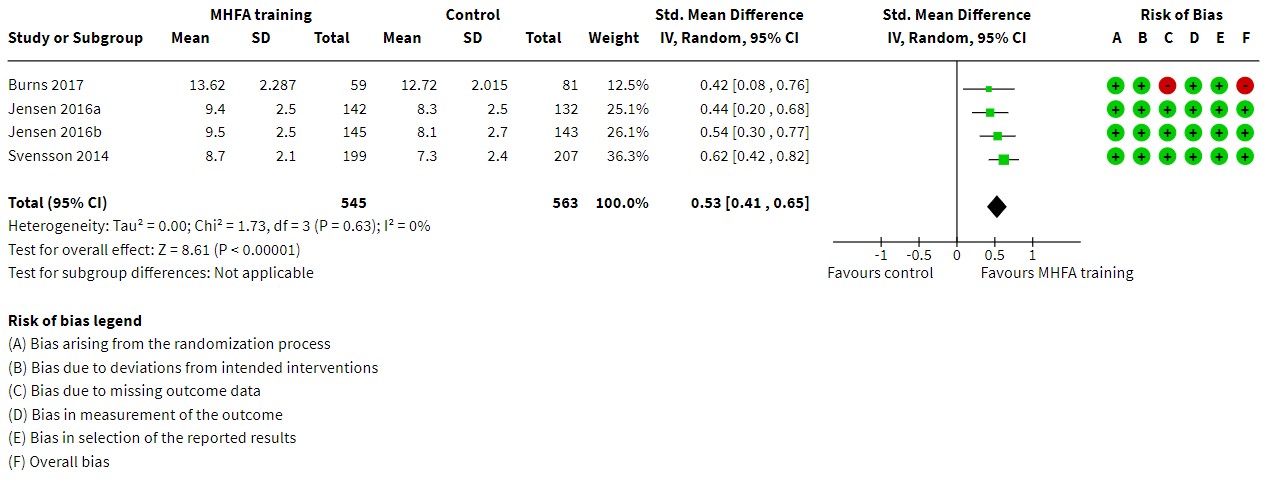

We recommend the use of forest plots that present risk-of-bias judgements alongside the results of each study included in a meta-analysis (see Figure 7.4.a). This will give a visual impression of the relative contributions of the studies at different levels of risk of bias, especially when considered in combination with the weight given to each study. This may assist authors in reaching overall conclusions about the risk of bias of the synthesized result, as discussed in Section 7.6. Optionally, forest plots or other tables or graphs can be ordered (stratified) by judgements on each risk-of-bias domain or by the overall risk-of-bias judgement for each result.

Review authors may wish to generate bar graphs illustrating the relative contributions of studies with each risk-of-bias judgement (low risk of bias, some concerns, and high risk of bias). When dividing up a bar into three regions for this purpose, it is preferable to determine the regions according to statistical information (e.g. precision, or weight in a meta-analysis) arising from studies in each category, rather than according to the number of studies in each category.

Figure 7.4.a Forest plot displaying RoB 2 risk-of-bias judgements for each randomized trial included in a meta-analysis of mental health first aid (MHFA) knowledge scores. Adapted from Morgan et al (2018).
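The recommendation to size bar-graph regions by statistical information rather than by study counts can be illustrated with a short calculation. This is a minimal sketch that assumes inverse-variance weights (1/SE²) as the measure of statistical information; the function name and the four studies are hypothetical.

```python
from collections import defaultdict


def weight_share_by_judgement(studies):
    """Return each judgement's share of total inverse-variance weight.

    `studies` is a list of (judgement, standard_error) pairs.
    """
    totals = defaultdict(float)
    for judgement, se in studies:
        totals[judgement] += 1.0 / se ** 2  # inverse-variance weight
    grand_total = sum(totals.values())
    return {judgement: w / grand_total for judgement, w in totals.items()}


# Hypothetical data: one precise low-risk study, three less precise studies.
studies = [
    ("low", 0.10),
    ("some concerns", 0.20),
    ("high", 0.20),
    ("high", 0.20),
]

shares = weight_share_by_judgement(studies)
for judgement, share in shares.items():
    print(f"{judgement}: {share:.0%} of total weight")
```

In this invented example, studies at high risk of bias make up half of the studies by count but contribute a smaller share of the weight, because the single study at low risk of bias is more precise.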

7.5 Summary assessments of risk of bias

Review authors should make explicit summary judgements about the risk of bias for important results both within studies and across studies (see MECIR Box 7.5.a). The tools currently recommended by Cochrane for assessing risk of bias within included studies (RoB 2 and ROBINS-I) produce an overall judgement of risk of bias for the result being assessed. These overall judgements are derived from assessments of individual bias domains as described, for example, in Chapter 8, Section 8.2.

To summarize risk of bias across study results in a synthesis, review authors should follow guidance for assessing certainty in the body of evidence (e.g. using GRADE), as described in Chapter 14, Section 14.2.2. When a meta-analysis is dominated by study results at high risk of bias, the certainty of the body of evidence may be rated as being lower than if such studies were excluded from the meta-analysis. Section 7.6 discusses some possible courses of action that may be preferable to retaining such studies in the synthesis.

MECIR Box 7.5.a Relevant expectations for conduct of intervention reviews

| C57: Summarizing risk-of-bias assessments (Highly desirable) | |
| --- | --- |
| Summarize the risk of bias for each key outcome for each study. | |

7.6 Incorporating assessment of risk of bias into analyses

7.6.1 Introduction

When performing and presenting meta-analyses, review authors should address risk of bias in the results of included studies (MECIR Box 7.6.a). It is not appropriate to present analyses and interpretations while ignoring flaws identified during the assessment of risk of bias. In this section we present suitable strategies for addressing risk of bias in results from studies included in a meta-analysis, either in order to understand the impact of bias or to determine a suitable estimate of intervention effect (Section 7.6.2). For the latter, decisions often involve a trade-off between bias and precision. A meta-analysis that includes all eligible studies may produce a result with high precision (narrow confidence interval) but be seriously biased because of flaws in the conduct of some of the studies. However, including only the studies at low risk of bias in all domains assessed may produce a result that is unbiased but imprecise (if there are only a few studies at low risk of bias).

MECIR Box 7.6.a Relevant expectations for conduct of intervention reviews

| C58: Addressing risk of bias in the synthesis (Highly desirable) | |
| --- | --- |
| Address risk of bias in the synthesis (whether quantitative or non-quantitative). For example, present analyses stratified according to summary risk of bias, or restricted to studies at low risk of bias. | |
| C59: Incorporating assessments of risk of bias (Mandatory) | |
| If randomized trials have been assessed using one or more tools in addition to the RoB 2 tool, use the RoB 2 tool as the primary assessment of bias for interpreting results, choosing the primary analysis, and drawing conclusions. | |

7.6.2 Including risk-of-bias assessments in analyses

Broadly speaking, studies at high risk of bias should be given reduced weight in meta-analyses compared with studies at low risk of bias. However, methodological approaches for weighting studies according to their risk of bias are not sufficiently well developed that they can currently be recommended for use in Cochrane Reviews.

When risks of bias vary across studies in a meta-analysis, four broad strategies are available to incorporate assessments into the analysis. The choice of strategy will influence which result to present as the main finding for a particular outcome (e.g. in the Abstract). The intended strategy should be described in the protocol for the review.

(1) Primary analysis restricted to studies at low risk of bias

The first approach involves restricting the primary analysis to studies judged to be at low risk of bias overall. Review authors who restrict their primary analysis in this way are encouraged to perform sensitivity analyses to show how conclusions might be affected if studies at a high risk of bias were included.

(2) Present multiple (stratified) analyses

Stratifying according to the overall risk of bias will produce multiple estimates of the intervention effect: for example, one based on all studies, one based on studies at low risk of bias, and one based on studies at high risk of bias. Two or more such estimates might be considered with equal prominence (e.g. the first and second of these). However, presenting the results in this way may be confusing for readers. In particular, people who need to make a decision usually require a single estimate of effect. Furthermore, ‘Summary of findings’ tables typically present only a single result for each outcome. On the other hand, a stratified forest plot presents all the information transparently. Though we would generally recommend stratification is done on the basis of overall risk of bias, review authors may choose to conduct subgroup analyses based on specific bias domains (e.g. risk of bias arising from the randomization process).
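The restricted and stratified analyses described in strategies (1) and (2) can be sketched as follows. This is an illustration only: it assumes a fixed-effect inverse-variance model for simplicity, and the study data, effect scale and function name are hypothetical.

```python
import math


def pool_fixed_effect(studies):
    """Inverse-variance fixed-effect pooled estimate and its standard error."""
    weights = [1.0 / s["se"] ** 2 for s in studies]
    pooled = sum(w * s["effect"] for w, s in zip(weights, studies)) / sum(weights)
    return pooled, math.sqrt(1.0 / sum(weights))


# Hypothetical effect estimates with overall risk-of-bias judgements.
studies = [
    {"id": "A", "effect": -0.10, "se": 0.20, "rob": "low"},
    {"id": "B", "effect": -0.20, "se": 0.25, "rob": "low"},
    {"id": "C", "effect": -0.50, "se": 0.15, "rob": "high"},
    {"id": "D", "effect": -0.60, "se": 0.20, "rob": "high"},
]

low_only = [s for s in studies if s["rob"] == "low"]
high_only = [s for s in studies if s["rob"] == "high"]

pooled_all, se_all = pool_fixed_effect(studies)
pooled_low, se_low = pool_fixed_effect(low_only)
pooled_high, se_high = pool_fixed_effect(high_only)

for label, est, se in [
    ("all studies", pooled_all, se_all),
    ("low risk of bias", pooled_low, se_low),
    ("high risk of bias", pooled_high, se_high),
]:
    lower, upper = est - 1.96 * se, est + 1.96 * se
    print(f"{label}: {est:.2f} (95% CI {lower:.2f} to {upper:.2f})")
```

With these invented data, the analysis restricted to studies at low risk of bias has a wider confidence interval than the analysis of all studies, which is the trade-off between bias and precision that the choice of strategy has to weigh.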

Formal comparisons of intervention effects according to risk of bias can be done with a test for differences across subgroups (e.g. comparing studies at high risk of bias with studies at low risk of bias), or by using meta-regression (for more details see Chapter 10, Section 10.11.4). However, review authors should be cautious in planning and carrying out such analyses, because an individual review may not have enough studies in each category of risk of bias to identify meaningful differences. Lack of a statistically significant difference between studies at high and low risk of bias should not be interpreted as absence of bias, because these analyses typically have low power.
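For two subgroups, a test for subgroup differences reduces to a z-test on the difference between the two pooled estimates. The sketch below assumes the pooled estimates are independent and approximately normally distributed; the function name and the input values are hypothetical.

```python
import math


def subgroup_difference_test(est_a, se_a, est_b, se_b):
    """Two-sided z-test for a difference between two pooled subgroup estimates."""
    diff = est_a - est_b
    se_diff = math.sqrt(se_a ** 2 + se_b ** 2)
    z = diff / se_diff
    # Two-sided p value from the standard normal distribution.
    p = math.erfc(abs(z) / math.sqrt(2))
    return diff, z, p


# Hypothetical pooled estimates: low risk of bias versus high risk of bias.
diff, z, p = subgroup_difference_test(-0.14, 0.16, -0.40, 0.15)
print(f"difference = {diff:.2f}, z = {z:.2f}, p = {p:.2f}")
```

In this invented example, the p value is above 0.05 even though the subgroup estimates differ noticeably. This is the situation in which a non-significant result should not be read as absence of bias.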

The choice between strategies (1) and (2) should be based to a large extent on the balance between the potential for bias and the loss of precision when studies at high or unclear risk of bias are excluded.

(3) Present all studies and provide a narrative discussion of risk of bias

The simplest approach to incorporating risk-of-bias assessments in results is to present an estimated intervention effect based on all available studies, together with a description of the risk of bias in individual domains, or a description of the summary risk of bias, across studies. This is the only feasible option when all studies are at the same risk of bias. However, when studies have different risks of bias, we discourage such an approach for two reasons. First, detailed descriptions of risk of bias in the Results section, together with a cautious interpretation in the Discussion section, will often be lost in the Authors’ conclusions, Abstract and ‘Summary of findings’ table, so that the final interpretation ignores the risk of bias and decisions continue to be based, at least in part, on compromised evidence. Second, such an analysis fails to down-weight studies at high risk of bias and so will lead to an overall intervention effect that is too precise, as well as being potentially biased.

When the primary analysis is based on all studies, summary assessments of risk of bias should be incorporated into explicit measures of the certainty of evidence for each important outcome, for example, by using the GRADE system (Guyatt et al 2008). This incorporation can help to ensure that judgements about the risk of bias, as well as other factors affecting the quality of evidence, such as imprecision, heterogeneity and publication bias, are considered appropriately when interpreting the results of the review (see Chapter 14 and Chapter 15).

(4) Adjust effect estimates for bias

A final, more sophisticated, option is to adjust the result from each study in an attempt to remove the bias. Adjustments are usually undertaken within a Bayesian framework, with assumptions about the size of the bias and its uncertainty being expressed through prior distributions (see Chapter 10, Section 10.13). Prior distributions may be based on expert opinion or on meta-epidemiological findings (Turner et al 2009, Welton et al 2009). The approach is increasingly used in decision making, where adjustments can additionally be made for applicability of the evidence to the decision at hand. However, we do not encourage use of bias adjustments in the context of a Cochrane Review because the assumptions required are strong, limited methodological expertise is available, and it is not possible to account for issues of applicability due to the diverse intended audiences for Cochrane Reviews. The approach might be entertained as a sensitivity analysis in some situations.
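To give a sense of what such an adjustment involves, the sketch below uses a simple additive bias model with a normal approximation. It is a simplification for illustration, not a full Bayesian analysis and not the specific method of the cited papers. It assumes that the bias in a study result has prior mean `bias_mean` and prior standard deviation `bias_sd`, both of which are hypothetical inputs that would have to come from expert opinion or meta-epidemiological evidence.

```python
import math


def adjust_for_bias(effect, se, bias_mean, bias_sd):
    """Shift an estimate by the assumed mean bias and widen its standard error.

    Assumes: observed effect = true effect + bias + sampling error,
    with bias ~ Normal(bias_mean, bias_sd**2), independent of sampling error.
    """
    adjusted_effect = effect - bias_mean
    adjusted_se = math.sqrt(se ** 2 + bias_sd ** 2)
    return adjusted_effect, adjusted_se


# Hypothetical study at high risk of bias; the prior values are invented.
adjusted_effect, adjusted_se = adjust_for_bias(
    effect=-0.50, se=0.15, bias_mean=-0.10, bias_sd=0.20
)
print(f"adjusted effect = {adjusted_effect:.2f}, adjusted SE = {adjusted_se:.2f}")
```

Because uncertainty about the bias is added to the sampling variance, an adjusted study receives less weight in an inverse-variance meta-analysis. The result depends entirely on the assumed prior, which is one reason the approach is better suited to sensitivity analysis.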

7.7 Considering risk of bias due to missing results

The 2011 Cochrane risk-of-bias tool for randomized trials encouraged a study-level judgement about whether there has been selective reporting, in general, of the trial results. As noted in Section 7.2.3.3, selective reporting can arise in several ways: (1) selective non-reporting of results, where results for some of the analysed outcomes are selectively omitted from a published report; (2) selective under-reporting of data, where results for some outcomes are selectively reported with inadequate detail for the data to be included in a meta-analysis; and (3) bias in selection of the reported result, where a result has been selected for reporting by the study authors, on the basis of the results, from multiple measurements or analyses that have been generated for the outcome domain (Page and Higgins 2016).

The RoB 2 and ROBINS-I tools focus solely on risk of bias as it pertains to a specific trial result. With respect to selective reporting, RoB 2 and ROBINS-I examine whether a specific result from the trial is likely to have been selected from multiple possible results on the basis of the findings (scenario 3 above). Guidance on assessing the risk of bias in selection of the reported result is available in Chapter 8 (for randomized trials) and Chapter 25 (for non-randomized studies of interventions).

If there is no result (i.e. it has been omitted selectively from the report or under-reported), then a risk-of-bias assessment at the level of the study result is not applicable. Selective non-reporting of results and selective under-reporting of data are therefore not covered by the RoB 2 and ROBINS-I tools. Instead, selective non-reporting of results and under-reporting of data should be assessed at the level of the synthesis across studies. Both practices lead to a situation similar to that when an entire study report is unavailable because of the nature of the results (also known as publication bias). Regardless of whether an entire study report or only a particular result of a study is unavailable, the same consequence can arise: bias in a synthesis because available results differ systematically from missing results (Page et al 2018). Chapter 13 provides detailed guidance on assessing risk of bias due to missing results in a systematic review.

7.8 Considering source of funding and conflict of interest of authors of included studies

Readers of a trial report often need to reflect on whether conflicts of interest have influenced the design, conduct, analysis and reporting of a trial. It is therefore now common for scientific journals to require authors of trial reports to provide a declaration of conflicts of interest (sometimes called ‘competing’ or ‘declarations of’ interest), to report funding sources and to describe any funder’s role in the trial.

In this section, we characterize conflicts of interest in randomized trials and discuss how conflicts of interest may impact on trial design and effect estimates. We also suggest how review authors can collect, process and use information on conflicts of interest in the assessment of:

- directness of studies to the review’s research question;

- heterogeneity in results due to differences in the designs of eligible studies;

- risk of bias in results of included studies;

- risk of bias in a synthesis due to missing results.

At the time of writing, a formal Tool for Addressing Conflicts of Interest in Trials (TACIT) is being developed under the auspices of the Cochrane Bias Methods Group. The TACIT development process has informed the content of this section, and we encourage readers to check http://tacit.one for more detailed guidance that will become available.

7.8.1 Characteristics of conflicts of interest

The Institute of Medicine defined conflicts of interest as “a set of circumstances that creates a risk that professional judgment or actions regarding a primary interest will be unduly influenced by a secondary interest” (Lo et al 2009). In a clinical trial, the primary interest is to provide patients, clinicians and health policy makers with an unbiased and clinically relevant estimate of an intervention effect. Secondary interests may be financial or non-financial.

Financial conflicts of interest involve both financial interests related to a specific trial (for example, a company funding a trial of a drug produced by the same company) and financial interests related to the authors of a trial report (for example, authors’ ownership of stocks or employment by a drug company).

For drug and device companies and other manufacturers, the financial difference between a negative and positive pivotal trial can be considerable. For example, the mean stock price of the companies funding 23 positive pivotal oncology trials increased by 14% after disclosure of the results (Rothenstein et al 2011). Industry funding is common, especially in drug trials. In a study of 200 trial publications from 2015, 68 (38%) of 178 trials with funding declarations were industry funded (Hakoum et al 2017). Also, in a cohort of oncology drug trials, industry funded 44% of trials and authors declared conflicts of interest in 69% of trials (Riechelmann et al 2007).

The degree of funding, and the type of involvement of industry funders, may differ across trials. In some situations, involvement includes only the provision of free study medication for a trial that has otherwise been planned and conducted independently and funded largely by public means. In other situations, a company fully funds and controls a trial. In rarer cases, head-to-head trials comparing two drugs may be funded by the two different companies producing the drugs.

A Cochrane Methodology Review analysed 75 studies of the association between industry funding and trial results (Lundh et al 2017). The authors concluded that trials funded by a drug or device company were more likely to have positive conclusions and statistically significant results, and that this association could not be explained by differences in risk of bias between industry and non-industry funded trials. However, industry and non-industry trials may differ in ways that may confound the association; for example due to choice of patient population, comparator interventions or outcomes. Only one of the included studies used a meta-epidemiological design and found no clear association between industry funding and the magnitude of intervention effects (Als-Nielsen et al 2003). Similar to the association with industry funding, other studies have reported that results of trials conducted by authors with a financial conflict of interest were more likely to be positive (Ahn et al 2017).

Conflicts of interest may also be non-financial (Viswanathan et al 2014). Characterizations of non-financial conflicts of interest differ somewhat, but typically distinguish between conflicts related mainly to an individual (e.g. adherence to a theory or ideology), relationships to other individuals (e.g. loyalty to friends, family members or close colleagues), or relationship to groups (e.g. work place or professional groups). In medicine, non-financial conflicts of interest have received less attention than financial conflicts of interest. In addition, financial and non-financial conflicts are often intertwined; for example, non-financial conflicts related to institutional association can be considered as indirect financial conflicts linked to employment. Definitions of what should be characterized as a ‘non-financial’ conflict of interest, and, in particular, whether personal beliefs, experiences or intellectual commitments should be considered conflicts of interest, have been debated (Bero and Grundy 2016).

It is useful to differentiate between non-financial conflicts of interest of a trial researcher and the basic interests and hopes involved in doing good trial research. Most researchers conducting a trial will have an interest in the scientific problem addressed, a well-articulated theoretical position, anticipation for a specific trial result, and hopes for publication in a respectable journal. This is not a conflict of interest but a basic condition for doing health research. However, individual researchers may lose sight of the primacy of the methodological neutrality at the heart of a scientific enquiry, and become unduly occupied with the secondary interest of how trial results may affect academic appearance or chances of future funding. Extreme examples are the publication of fabricated trial data or trials, some of which have had an impact on systematic reviews (Marret et al 2009).

Few empirical studies of non-financial conflicts of interest in randomized trials have been published, and to our knowledge there are none that assess the impact of non-financial conflicts of interest on trial results and conclusions. However, non-financial conflicts of interest have been investigated in other types of clinical research; for example, guideline authors’ specialty appears to have influenced their voting behaviour while developing guidelines for mammography screening (Norris et al 2012).

7.8.2 Conflict of interest and trial design

Core decisions on designing a trial involve defining the type of participants to be included, the type of experimental intervention, the type of comparator, the outcomes (and timing of outcome assessments) and the choice of analysis. Such decisions will often reflect a compromise between what is clinically and scientifically ideal and what is practically possible. However, when investigators have important conflicts of interest, a trial may be designed in a way that increases its chances of detecting a positive trial result, at the expense of clinical applicability. For example, narrow eligibility criteria may exclude older and frail patients, thus reducing the possibility of detecting clinically relevant harms. Alternatively, trial designers may choose placebo as a comparator despite an effective intervention being in regular use, or they may focus on short-term surrogate outcomes rather than clinically relevant long-term outcomes (Estellat and Ravaud 2012, Wieland et al 2017).

Trial design choices may be more subtle. For example, a trial may be designed to favour an experimental drug by using an inferior comparator drug when better alternatives exist (Safer 2002) or by using a low dose of the comparator drug when the focus is efficacy and a high dose of the comparator drug when the focus is harms (Mann and Djulbegovic 2013). In a typical Cochrane Review with fairly broad eligibility criteria aiming to identify and summarize all relevant trials, it is pertinent to consider the degree to which a given trial result directly relates to the question posed by the review. If all or most identified trials have narrow eligibility criteria and short-term outcomes, a review question focusing on broad patient categories and long-term effects can only be answered indirectly by the included studies. This has implications for the assessment of the certainty of the evidence provided by the review, which is addressed through the concept of indirectness in the GRADE framework (see Chapter 14, Section 14.2).

If results in a meta-analysis display heterogeneity, then differences in design choices that are driven by conflicts of interest may be one reason for this. Thus, conflicts of interest may also affect reflections on the certainty of the evidence through the GRADE concept of inconsistency.

7.8.3 Conflicts of interest and risk of bias in a trial’s effect estimate

Authors of Cochrane Reviews have sometimes included conflicts of interest as an ‘other source of bias’ while using the previous versions of the risk-of-bias tool (Jørgensen et al 2016). Consistent with previous versions of the Handbook, we discourage the inclusion of conflicts of interest directly in the risk-of-bias assessment. Adding conflicts of interest to the bias tool is inconsistent with the conceptual structure of the tool, which is built on mechanistically defined bias domains. Also, restricting consideration of the potential impact of conflicts of interest to a question of risk of bias in an individual trial result overlooks other important aspects, such as the design of the trial (see Section 7.8.2) and potential bias in a meta-analysis due to missing results (see Section 7.8.4).

Conflicts of interest may lead to bias in effect estimates from a trial through several mechanisms. For example, if those recruiting participants into a trial have important conflicts of interest and the allocation sequence is not concealed, then they may be more likely to subvert the allocation process to produce intervention groups that are systematically unbalanced in favour of their preferred intervention. Similarly, investigators with important conflicts of interest may decide to exclude from the analysis some patients who did not respond as anticipated to the experimental intervention, resulting in bias due to missing outcome data. Furthermore, selective reporting of a favourable result may be strongly associated with conflicts of interest (McGauran et al 2010), due to either selective reporting of particular outcome measurements or selective reporting of particular analyses (Eyding et al 2010, Vedula et al 2013). One study found that use of modified-intention-to-treat analysis and post-randomization exclusions occurred more often in trials with industry funding or author conflicts of interest (Montedori et al 2011). Accessing the trial protocol and statistical analysis plan to determine which outcomes and analyses were pre-specified is therefore especially important for a trial with relevant conflicts of interest.

Review authors should explain how consideration of conflicts of interest informed their risk-of-bias judgements. For example, when information on the analysis plans is lacking, review authors may judge the risk of bias in selection of the reported result to be high if the study investigators had important financial conflicts of interest. Conversely, if trial investigators have clearly used methods that are likely to minimize bias, review authors should not judge the risk of bias for each domain higher just because the investigators happen to have conflicts of interest. In addition, as an optional component in the revised risk-of-bias tool, review authors may reflect on the direction of bias (e.g. bias in favour of the experimental intervention). Information on conflicts of interest may inform the assessment of direction of bias.

7.8.4 Conflicts of interest and risk of bias in a synthesis of trial results

Conflicts of interest may also affect the decision not to report trial results. Conflicts of interest are probably one of several important reasons for decisions not to publish trials with negative findings, and not to publish unfavourable results (Sterne 2013). When relevant trial results are systematically missing from a meta-analysis because of the nature of the findings, the synthesis is at risk of bias due to missing results. Chapter 13 provides detailed guidance on assessing risk of bias due to missing results in a systematic review.

7.8.5 Practical approach to identifying and extracting information on conflicts of interest

When assessing conflicts of interest in a trial, review authors will, to a large degree, rely on declared conflicts. Source of funding may be reported in a trial publication, and conflicts of interest may be reported in an accompanying declaration, for example the International Committee of Medical Journal Editors (ICMJE) declaration. In a random sample of 1002 articles published in 2016, authors of 229 (23%) declared having a conflict of interest (Grundy et al 2018). Unfortunately, undeclared conflicts of interest and sources of funding are fairly common (Rasmussen et al 2015, Patel et al 2018).

It is always prudent to examine closely the conflicts of interest of lead and corresponding authors, based on information reported in the trial publication and the author declaration (for example, the ICMJE declaration form). Review authors should also consider examining conflicts of interest of trial co-authors and any commercial collaborators with conflicts of interest; for example, a commercial contract research organization hired by the funder to collect and analyse trial data or the involvement of a medical writing agency. Because undisclosed conflicts of interest are highly prevalent, review authors should consider extending their search for conflicts of interest data to other sources, such as disclosures in other publications by the authors, the trial protocol, the clinical study report, and public conflicts of interest registries (e.g. the Open Payments database).

We suggest that review authors balance the workload involved with the expected gain, and search additional sources of information on conflicts of interest when there is reason to suspect important conflicts of interest. As a rule of thumb, in trials with unclear funding source and no declaration of conflicts of interest from lead or corresponding authors, we suggest review authors search the Open Payments database, ClinicalTrials.gov, and conflicts of interest declarations in a few previous publications by the study authors. In trials with no commercial funding (including no company employee co-authors) and no declared conflicts of interest for lead or corresponding authors, we suggest review authors need not consult additional sources. Also, for trials where lead or corresponding authors have clear conflicts of interest, little additional information may be gained from checking conflicts of interest of co-authors.
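A review team that wants to apply this rule of thumb consistently across many trials could encode it as a small helper. The sketch below is one possible reading of the two explicit rules above, not an official algorithm. The function name, the category labels and the choice to return `None` when neither rule applies are all hypothetical.

```python
# Sources named in the rule of thumb above.
ADDITIONAL_SOURCES = [
    "Open Payments database",
    "ClinicalTrials.gov",
    "conflicts of interest declarations in previous publications by the study authors",
]


def additional_sources_to_search(funding, lead_author_declaration):
    """Suggest extra sources to search for conflicts of interest.

    funding: "commercial", "non-commercial" or "unclear".
        "non-commercial" is assumed to also mean no company employee co-authors.
    lead_author_declaration: "conflicts declared", "none declared" or "missing",
        referring to the lead or corresponding authors.

    Returns a list of sources, an empty list when no further search is
    suggested, or None when neither rule applies and the team should weigh
    workload against expected gain.
    """
    if funding == "unclear" and lead_author_declaration == "missing":
        return list(ADDITIONAL_SOURCES)
    if funding == "non-commercial" and lead_author_declaration == "none declared":
        return []
    return None


print(additional_sources_to_search("unclear", "missing"))
print(additional_sources_to_search("non-commercial", "none declared"))
print(additional_sources_to_search("commercial", "conflicts declared"))
```

Returning `None` for the remaining combinations keeps the helper from implying a recommendation that the guidance does not make.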

Gaining access to relevant information on financial conflicts of interest is possible for a considerable number of trials, despite inherent problems of undeclared conflicts. We expect that the proportion of trials with relevant declarations will increase further.

Access to relevant information on non-financial conflicts of interest is more difficult to gain. Declaration of non-financial conflicts of interest is requested by approximately 50% of journals (Shawwa et al 2016). The term was deleted from ICMJE’s declaration in 2010 in exchange for a broad category of “Other relationships or activities” (Drazen et al 2010). Therefore, non-financial conflicts of interest are seldom self-declared, although if available, such information should be considered.

Non-financial conflicts of interest are difficult to address due to lack of relevant empirical studies on their impact on study results, lack of relevant thresholds for importance, and lack of declaration in many previous trials. However, as a rule of thumb, we suggest that review authors assume trial authors have no non-financial conflicts of interest unless there are clear indications to the contrary. Examples of such clues could be considerable spin in trial publications (Boutron et al 2010), an institutional relationship pertinent to the intervention tested, or external evidence of a fixated ideological or theoretical position.

7.8.6 Judgement of notable concern about conflict of interest

Review authors should describe funding information and conflicts of interest of authors for all studies in the ‘Characteristics of included studies’ table (MECIR Box 7.8.a). Also, review authors may want to explore (e.g. in a subgroup analysis) whether trials with conflicts of interest have different intervention effect estimates, or more variable effect estimates, than trials without conflicts of interest. In both cases, review authors need to aim for a relevant threshold for when any conflict of interest is deemed important. If the threshold is set too low, there is a risk that trivial conflicts of interest will cloud important ones; if set too high, there is a risk that important conflicts of interest are downplayed or ignored.

This judgement should take into account both the degree of conflicts of interest of study authors and also the extent of their involvement in the study. We pragmatically suggest review authors aim for a judgement about whether or not there is reason for ‘notable concern’ about conflicts of interest. This information could be displayed in a table with three columns:

- trial identifier;

- judgement (e.g. ‘notable concern about conflict of interest’ versus ‘no notable concern about conflict of interest’); and

- rationale for judgement, potentially subdivided according to who had conflicts of interest (e.g. lead or corresponding authors, other authors) and stage(s) of the trial to which they contributed (design, conduct, analysis, reporting).
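As a purely illustrative sketch, the three-column table could be held as simple records and rendered as text. The class `ConcernJudgement`, the function `render_table`, and both example trials are hypothetical and are not taken from any real review.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ConcernJudgement:
    """One row of the suggested table (hypothetical structure)."""
    trial_id: str
    notable_concern: bool
    rationale: str

    def label(self) -> str:
        # Wording of the two judgement options suggested in the text.
        if self.notable_concern:
            return "notable concern about conflict of interest"
        return "no notable concern about conflict of interest"


def render_table(rows: List[ConcernJudgement]) -> str:
    """Render the rows as a plain three-column text table."""
    lines = ["Trial | Judgement | Rationale"]
    for row in rows:
        lines.append(f"{row.trial_id} | {row.label()} | {row.rationale}")
    return "\n".join(lines)


# Hypothetical entries, for illustration only.
rows = [
    ConcernJudgement(
        "Trial A (hypothetical)",
        True,
        "Lead author employed by funder; contributed to design, "
        "analysis and reporting",
    ),
    ConcernJudgement(
        "Trial B (hypothetical)",
        False,
        "Academic trial; trial medication supplied at arm's length; "
        "lead authors declared no conflicts",
    ),
]
print(render_table(rows))
```

Keeping the rationale as free text leaves room to state who had the conflict and at which stage(s) of the trial they contributed.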

A judgement of ‘notable concern about conflict of interest’ should be based on a considered assessment of identified conflicts of interest. A hypothetical possibility of undeclared conflicts of interest is, as a rule of thumb, not considered sufficient reason for ‘notable concern’. By ‘notable concern’ we mean important conflicts of interest expected to have a potential impact on study design, risk of bias in study results or risk of bias in a synthesis due to missing results. For example, financial conflicts of interest are important in a trial initiated, designed, analysed and reported by drug or device company employees. Conversely, financial conflicts of interest are less important in a trial initiated, designed, analysed and reported by academics adhering to the arm’s length principle when acquiring free trial medication from a drug company, and where lead authors have no conflicts of interest. Similarly, non-financial conflicts of interest may be important in a trial of a highly controversial and ideologically loaded question such as the adverse effect of male circumcision. Non-financial conflicts of interest are less concerning in a trial comparing two treatments in general use with no connection to highly controversial scientific theories, ideology or professional groups. Mixing trivial conflicts of interest with important ones may mask the latter and will expand review author workload considerably.

MECIR Box 7.8.a Relevant expectations for conduct of intervention reviews

| C60: Addressing conflicts of interest in included trials (Highly desirable) |
| --- |
| Address conflict of interests in included trials, and reflect on possible impact on: (a) differences in study design; (b) risk of bias in trial result, and (c) risk of bias in synthesis result. |

7.9 Chapter information

Authors: Isabelle Boutron, Matthew J Page, Julian PT Higgins, Douglas G Altman, Andreas Lundh, Asbjørn Hróbjartsson

Acknowledgements: We thank Gerd Antes, Peter Gøtzsche, Peter Jüni, Steff Lewis, David Moher, Andrew Oxman, Ken Schulz, Jonathan Sterne and Simon Thompson for their contributions to previous versions of this chapter.

7.10 References

Ahn R, Woodbridge A, Abraham A, Saba S, Korenstein D, Madden E, Boscardin WJ, Keyhani S. Financial ties of principal investigators and randomized controlled trial outcomes: cross sectional study. BMJ 2017; 356: i6770.

Als-Nielsen B, Chen W, Gluud C, Kjaergard LL. Association of funding and conclusions in randomized drug trials: a reflection of treatment effect or adverse events? JAMA 2003; 290: 921-928.

Bero LA, Grundy Q. Why Having a (Nonfinancial) Interest Is Not a Conflict of Interest. PLoS Biology 2016; 14: e2001221.

Blümle A, Meerpohl JJ, Schumacher M, von Elm E. Fate of clinical research studies after ethical approval - follow-up of study protocols until publication. PLoS One 2014; 9: e87184.

Boutron I, Dutton S, Ravaud P, Altman DG. Reporting and interpretation of randomized controlled trials with statistically nonsignificant results for primary outcomes. JAMA 2010; 303: 2058-2064.

Chan A-W, Song F, Vickers A, Jefferson T, Dickersin K, Gøtzsche PC, Krumholz HM, Ghersi D, van der Worp HB. Increasing value and reducing waste: addressing inaccessible research. The Lancet 2014; 383: 257-266.

Chan AW, Hróbjartsson A, Haahr MT, Gøtzsche PC, Altman DG. Empirical evidence for selective reporting of outcomes in randomized trials: comparison of protocols to published articles. JAMA 2004a; 291: 2457-2465.

Chan AW, Krleža-Jeric K, Schmid I, Altman DG. Outcome reporting bias in randomized trials funded by the Canadian Institutes of Health Research. Canadian Medical Association Journal 2004b; 171: 735-740.

da Costa BR, Beckett B, Diaz A, Resta NM, Johnston BC, Egger M, Jüni P, Armijo-Olivo S. Effect of standardized training on the reliability of the Cochrane risk of bias assessment tool: a prospective study. Systematic Reviews 2017; 6: 44.

Dechartres A, Boutron I, Trinquart L, Charles P, Ravaud P. Single-center trials show larger treatment effects than multicenter trials: evidence from a meta-epidemiologic study. Annals of Internal Medicine 2011; 155: 39-51.

Dechartres A, Trinquart L, Boutron I, Ravaud P. Influence of trial sample size on treatment effect estimates: meta-epidemiological study. BMJ 2013; 346: f2304.

Dechartres A, Trinquart L, Faber T, Ravaud P. Empirical evaluation of which trial characteristics are associated with treatment effect estimates. Journal of Clinical Epidemiology 2016a; 77: 24-37.

Dechartres A, Ravaud P, Atal I, Riveros C, Boutron I. Association between trial registration and treatment effect estimates: a meta-epidemiological study. BMC Medicine 2016b; 14: 100.

Dechartres A, Trinquart L, Atal I, Moher D, Dickersin K, Boutron I, Perrodeau E, Altman DG, Ravaud P. Evolution of poor reporting and inadequate methods over time in 20 920 randomised controlled trials included in Cochrane reviews: research on research study. BMJ 2017; 357: j2490.

Dechartres A, Atal I, Riveros C, Meerpohl J, Ravaud P. Association between publication characteristics and treatment effect estimates: A meta-epidemiologic study. Annals of Internal Medicine 2018.

Drazen JM, de Leeuw PW, Laine C, Mulrow C, DeAngelis CD, Frizelle FA, Godlee F, Haug C, Hébert PC, Horton R, Kotzin S, Marusic A, Reyes H, Rosenberg J, Sahni P, Van der Weyden MB, Zhaori G. Towards more uniform conflict disclosures: the updated ICMJE conflict of interest reporting form. BMJ 2010; 340: c3239.

Duyx B, Urlings MJE, Swaen GMH, Bouter LM, Zeegers MP. Scientific citations favor positive results: a systematic review and meta-analysis. Journal of Clinical Epidemiology 2017; 88: 92-101.

Easterbrook PJ, Berlin JA, Gopalan R, Matthews DR. Publication bias in clinical research. Lancet 1991; 337: 867-872.

Estellat C, Ravaud P. Lack of head-to-head trials and fair control arms: randomized controlled trials of biologic treatment for rheumatoid arthritis. Archives of Internal Medicine 2012; 172: 237-244.

Eyding D, Lelgemann M, Grouven U, Harter M, Kromp M, Kaiser T, Kerekes MF, Gerken M, Wieseler B. Reboxetine for acute treatment of major depression: systematic review and meta-analysis of published and unpublished placebo and selective serotonin reuptake inhibitor controlled trials. BMJ 2010; 341: c4737.

Fanelli D, Costas R, Ioannidis JPA. Meta-assessment of bias in science. Proceedings of the National Academy of Sciences of the United States of America 2017; 114: 3714-3719.

Franco A, Malhotra N, Simonovits G. Publication bias in the social sciences: unlocking the file drawer. Science 2014; 345: 1502-1505.

Gates A, Vandermeer B, Hartling L. Technology-assisted risk of bias assessment in systematic reviews: a prospective cross-sectional evaluation of the RobotReviewer machine learning tool. Journal of Clinical Epidemiology 2018; 96: 54-62.

Grundy Q, Dunn AG, Bourgeois FT, Coiera E, Bero L. Prevalence of Disclosed Conflicts of Interest in Biomedical Research and Associations With Journal Impact Factors and Altmetric Scores. JAMA 2018; 319: 408-409.

Guyatt GH, Oxman AD, Vist GE, Kunz R, Falck-Ytter Y, Alonso-Coello P, Schünemann HJ. GRADE: an emerging consensus on rating quality of evidence and strength of recommendations. BMJ 2008; 336: 924-926.

Hakoum MB, Jouni N, Abou-Jaoude EA, Hasbani DJ, Abou-Jaoude EA, Lopes LC, Khaldieh M, Hammoud MZ, Al-Gibbawi M, Anouti S, Guyatt G, Akl EA. Characteristics of funding of clinical trials: cross-sectional survey and proposed guidance. BMJ Open 2017; 7: e015997.

Hartling L, Hamm MP, Milne A, Vandermeer B, Santaguida PL, Ansari M, Tsertsvadze A, Hempel S, Shekelle P, Dryden DM. Testing the risk of bias tool showed low reliability between individual reviewers and across consensus assessments of reviewer pairs. Journal of Clinical Epidemiology 2013; 66: 973-981.

Higgins JPT, Altman DG, Gøtzsche PC, Jüni P, Moher D, Oxman AD, Savovic J, Schulz KF, Weeks L, Sterne JAC. The Cochrane Collaboration's tool for assessing risk of bias in randomised trials. BMJ 2011; 343: d5928.

Hopewell S, Clarke M, Stewart L, Tierney J. Time to publication for results of clinical trials. Cochrane Database of Systematic Reviews 2007; 2: MR000011.

Hopewell S, Boutron I, Altman D, Ravaud P. Incorporation of assessments of risk of bias of primary studies in systematic reviews of randomised trials: a cross-sectional study. BMJ Open 2013; 3: 8.

Jefferson T, Jones MA, Doshi P, Del Mar CB, Hama R, Thompson MJ, Onakpoya I, Heneghan CJ. Risk of bias in industry-funded oseltamivir trials: comparison of core reports versus full clinical study reports. BMJ Open 2014; 4: e005253.

Jones CW, Keil LG, Holland WC, Caughey MC, Platts-Mills TF. Comparison of registered and published outcomes in randomized controlled trials: a systematic review. BMC Medicine 2015; 13: 282.

Jørgensen L, Paludan-Muller AS, Laursen DR, Savovic J, Boutron I, Sterne JAC, Higgins JPT, Hróbjartsson A. Evaluation of the Cochrane tool for assessing risk of bias in randomized clinical trials: overview of published comments and analysis of user practice in Cochrane and non-Cochrane reviews. Systematic Reviews 2016; 5: 80.

Jüni P, Witschi A, Bloch R, Egger M. The hazards of scoring the quality of clinical trials for meta-analysis. JAMA 1999; 282: 1054-1060.

Jüni P, Altman DG, Egger M. Systematic reviews in health care: Assessing the quality of controlled clinical trials. BMJ 2001; 323: 42-46.

Kirkham JJ, Dwan KM, Altman DG, Gamble C, Dodd S, Smyth R, Williamson PR. The impact of outcome reporting bias in randomised controlled trials on a cohort of systematic reviews. BMJ 2010; 340: c365.

Li G, Abbade LPF, Nwosu I, Jin Y, Leenus A, Maaz M, Wang M, Bhatt M, Zielinski L, Sanger N, Bantoto B, Luo C, Shams I, Shahid H, Chang Y, Sun G, Mbuagbaw L, Samaan Z, Levine MAH, Adachi JD, Thabane L. A systematic review of comparisons between protocols or registrations and full reports in primary biomedical research. BMC Medical Research Methodology 2018; 18: 9.

Lo B, Field MJ, Institute of Medicine (US) Committee on Conflict of Interest in Medical Research Education and Practice. Conflict of Interest in Medical Research, Education, and Practice. Washington, D.C.: National Academies Press (US); 2009.

Lundh A, Lexchin J, Mintzes B, Schroll JB, Bero L. Industry sponsorship and research outcome. Cochrane Database of Systematic Reviews 2017; 2: MR000033.

Mann H, Djulbegovic B. Comparator bias: why comparisons must address genuine uncertainties. Journal of the Royal Society of Medicine 2013; 106: 30-33.

Marret E, Elia N, Dahl JB, McQuay HJ, Møiniche S, Moore RA, Straube S, Tramèr MR. Susceptibility to fraud in systematic reviews: lessons from the Reuben case. Anesthesiology 2009; 111: 1279-1289.

Marshall IJ, Kuiper J, Wallace BC. RobotReviewer: evaluation of a system for automatically assessing bias in clinical trials. Journal of the American Medical Informatics Association 2016; 23: 193-201.

McGauran N, Wieseler B, Kreis J, Schuler YB, Kolsch H, Kaiser T. Reporting bias in medical research - a narrative review. Trials 2010; 11: 37.